Buying Verified Lists vs Scraping Targets: Results and What the Data Shows

GSA SER Verified Lists vs Scraping

Understanding the Core Concepts

The world of automated link building revolves around efficiency and source quality. For GSA Search Engine Ranker (GSA SER) users, the debate between using pre-tested resources and generating fresh targets is constant. The question of GSA SER verified lists vs scraping comes down to a fundamental strategic choice. One method relies on curated, pre-tested databases of URLs, while the other harvests new opportunities from search engines in real time.

The Case for Verified Lists

Instant Success Rates and Time Savings

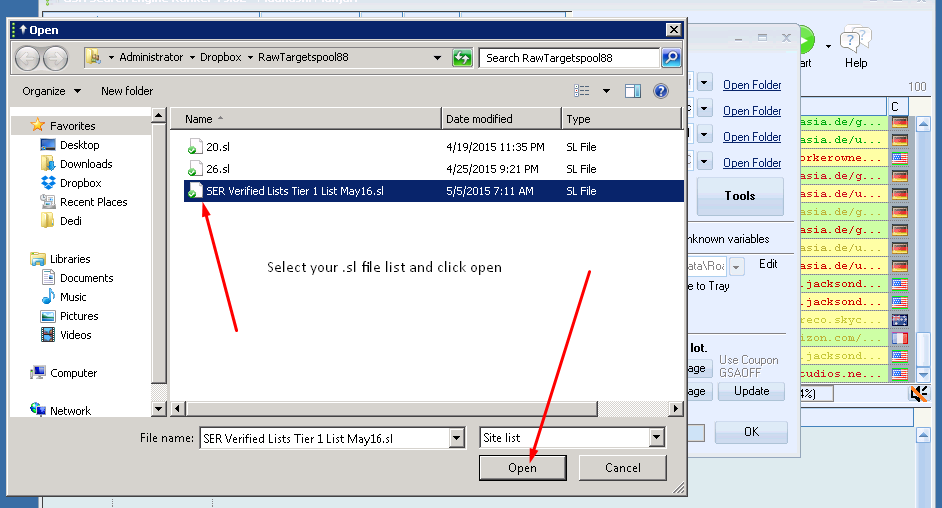

Verified lists are pre-scanned collections of sites known to accept registrations, guestbook entries, or comments. They are typically sold or shared within communities and often come with metadata about engine type, platform, and success probability. The primary advantage is an immediate reduction in wasted attempts. Because these URLs have already accepted a submission at least once, your software skips the search-engine discovery phase and spends far fewer attempts on dead or incompatible sites, which raises your links-per-minute (LPM) rate.

Predictable Resources and Niche Control

Another strength lies in knowing exactly what you are getting. Lists can be filtered by platform (WordPress, Drupal, etc.), language, or PageRank before a campaign even starts. This allows for targeting specific footprints without burning proxies or bandwidth on random discovery. For users building multiple projects in the same niche, a high-quality verified list becomes a reusable asset.

The Case for Scraping

The Power of Fresh, Unspammed URLs

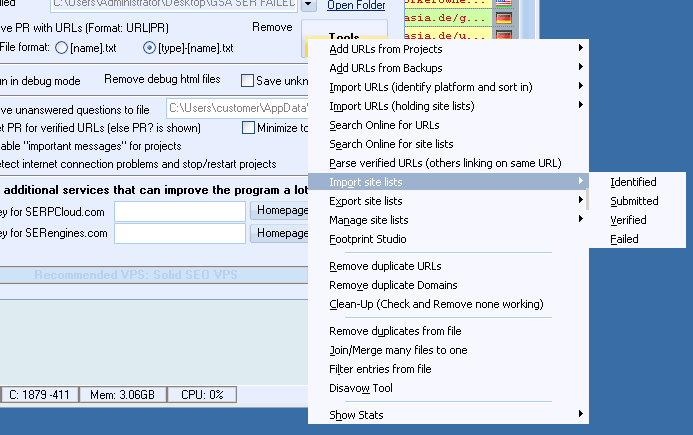

Scraping means instructing GSA SER to query search engines such as Google, Bing, or Yahoo using target keywords combined with footprint strings. The tool then harvests new URLs from the results, potentially millions of them. The greatest benefit is tapping into targets that have never appeared in any public list. Many high-value, low-competition platforms remain invisible unless you scrape for them yourself, and finding them this way keeps you clear of the footprint of a saturated list that thousands of other SEOs are also hitting.

Customization and Contextual Relevance

When you scrape, you can inject niche-specific keywords directly into the search queries. This generates a stream of targets contextually aligned with your money site, which a generic verified list rarely provides. A query such as "powered by wordpress + your_keyword" returns pages already tied to your topic, creating relevance signals that pre-packaged lists usually lack.

Direct Comparison: Verified Lists vs Scraping

Ratio of Verified to Submitted Links

In the GSA SER verified lists vs scraping matchup, the verified approach often shows a very high verification rate, sometimes exceeding 80%, because the targets are pre-tested. Scraping, on the other hand, may see a low single-digit verification rate, since most harvested URLs are closed, spam-blocked, or incompatible with any supported engine. However, the sheer volume from scraping can still produce a larger absolute number of live links if configured correctly.
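To make the volume argument concrete, here is a small Python sketch that turns batch size and verification rate into expected live links. The 80% figure echoes the text above; the 2% scraping rate and both batch sizes are hypothetical values chosen only to show how a low rate on a large batch can still outproduce a high rate on a small one.

```python
def expected_verified(submitted: int, verify_rate: float) -> int:
    """Expected number of verified (live) links from a batch of targets."""
    return round(submitted * verify_rate)


# Hypothetical batch sizes; 0.80 echoes the text, 0.02 stands in for a
# "low single-digit" scraping verification rate.
list_links = expected_verified(10_000, 0.80)
scraped_links = expected_verified(500_000, 0.02)

# Failed submissions are the attempts that still cost threads and proxy time.
list_failed = 10_000 - list_links
scraped_failed = 500_000 - scraped_links

print(f"Verified list: {list_links} live, {list_failed} failed")
print(f"Scraping:      {scraped_links} live, {scraped_failed} failed")
```

With these assumed numbers, scraping yields 10,000 live links against the list's 8,000, but only after 490,000 failed submissions compared with 2,000. That gap is where the extra proxy and thread cost of scraping comes from.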

Proxy and Resource Consumption

Running from verified lists is light on proxies and threads. Since the software doesn't need to send search queries or parse SERPs, it can operate on a minimal proxy supply. Scraping is resource-intensive: it burns through search engine queries rapidly and needs a large pool of high-quality private proxies to avoid IP bans, which directly increases operational costs.

Longevity and Footprint Risks

Public verified lists carry a hidden danger: uniformity. When a list is used by hundreds of marketers, the platforms eventually become spammed to death, and search engines may devalue links from these known automated targets. Scraping carves out a unique backlink profile, but it also risks hitting honeypots or generating an unnatural pattern if the search queries are not rotated intelligently.

Crafting a Hybrid Strategy

Mixing Both for Optimal Campaigns

The most sophisticated GSA SER users rarely choose one side exclusively. A common blueprint is to start a project with scraped, keyword-rich targets to build a unique, contextual foundation, then layer in verified lists to rapidly boost quantity and diversify platform types. Combining the two offsets each method's weaknesses: scraping adds freshness and relevance, while verified lists add consistency and speed.

Identifying Quality in Either Source

Whether you lean toward a purchased list or your own scraping engine, filtering remains the unsung hero. GSA SER's global site lists, skip lists, and filtering rules turn both raw scraped data and bulk verified lists into cleaner, safer target pools. Without robust post-processing, either approach will tank your verified rate and risk damaging your site's standing.
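The kind of post-processing described above can be sketched outside GSA SER as a plain Python filter. This is not how GSA SER implements its site lists or skip lists. It is a hypothetical helper (`clean_targets` is an illustrative name) that assumes targets arrive as raw URL strings and that a skip list is a set of bare domain names. It keeps only http(s) URLs, drops skip-listed domains, and optionally keeps one URL per domain. It needs Python 3.9+ for `str.removeprefix`.

```python
from urllib.parse import urlparse


def clean_targets(urls: list[str], skip_domains: set[str],
                  one_per_domain: bool = True) -> list[str]:
    """Filter raw target URLs (hypothetical helper, not a GSA SER API).

    - keeps only http/https URLs that have a hostname
    - drops URLs whose host is on the skip list (exact match, "www." ignored)
    - optionally keeps only the first URL seen for each domain
    """
    skip = {d.lower().removeprefix("www.") for d in skip_domains}
    seen: set[str] = set()
    kept: list[str] = []
    for raw in urls:
        url = raw.strip()
        parsed = urlparse(url)
        if parsed.scheme not in ("http", "https") or not parsed.hostname:
            continue  # malformed or non-web entry
        # .hostname is already lowercased by urlparse
        host = parsed.hostname.removeprefix("www.")
        if host in skip:
            continue  # domain is on the skip list
        if one_per_domain:
            if host in seen:
                continue  # already have a target on this domain
            seen.add(host)
        kept.append(url)
    return kept


# Example input using reserved example/test domains.
raw_targets = [
    "https://www.example.com/guestbook",
    "http://example.com/blog/",
    "ftp://files.example.org/",
    "https://spam-trap.test/page",
    "not a url",
    "https://Another.example.net/post",
]
print(clean_targets(raw_targets, {"spam-trap.test"}))
```

Here only `https://www.example.com/guestbook` and `https://Another.example.net/post` survive. Exact host matching means a skip entry like `spam-trap.test` will not block `blog.spam-trap.test`; a fuller filter would likely check parent domains as well.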

Making the Decision for Your Project

Choosing between GSA SER verified lists and scraping depends on your campaign goals, budget, and tolerance for noise. If you need fast, reliable links for tier 2 or tier 3 properties and have a tight proxy budget, a top-tier verified list is usually the better fit. If you are building a tier 1 buffer site or need strong niche relevance across hundreds of thousands of links, investing time and resources in advanced scraping is the superior path. Ultimately, understanding the mechanics behind both lets you deploy each technique where it delivers the highest return.